NTT Overcomes the “Vocabulary Barrier” Between LLMs

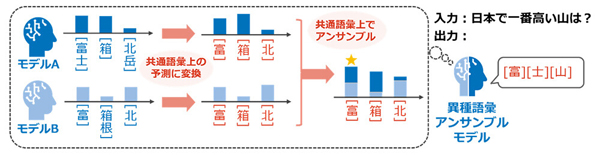

NTT has established the world’s first inference technology that reduces the vocabulary of “tokens,” the input/output units of LLMs, without degrading accuracy, making it possible for different LLMs to share a common token vocabulary.

Previously, inference-time collaboration among multiple LLMs, such as ensemble modeling, required every LLM to use the same token vocabulary. Ensemble modeling is a classic collaboration technique that aggregates the probabilities that multiple models assign to each output candidate and produces an output the models can agree on.
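To make the vocabulary constraint concrete, here is a minimal sketch of ensemble modeling for a single next-token decision. It is not NTT's implementation: the function names, the toy tokens, and the use of a plain arithmetic mean as the aggregation rule are all assumptions made for illustration.

```python
from typing import Dict, List


def average_distributions(distributions: List[Dict[str, float]]) -> Dict[str, float]:
    """Average the probability each model assigns to every candidate token.

    Illustrative assumption: aggregation is a simple arithmetic mean.
    """
    if not distributions:
        raise ValueError("need at least one distribution")
    vocab = set(distributions[0])
    for dist in distributions[1:]:
        # The probabilities can only be combined token by token,
        # so every model must score exactly the same set of tokens.
        if set(dist) != vocab:
            raise ValueError("token vocabularies differ; cannot aggregate directly")
    n = len(distributions)
    return {tok: sum(d[tok] for d in distributions) / n for tok in vocab}


def ensemble_next_token(distributions: List[Dict[str, float]]) -> str:
    """Return the token with the highest averaged probability."""
    averaged = average_distributions(distributions)
    return max(averaged, key=averaged.get)


# Hypothetical next-token probabilities from two models with the same vocabulary.
model_a = {"cat": 0.5, "dog": 0.4, "bird": 0.1}
model_b = {"cat": 0.2, "dog": 0.6, "bird": 0.2}

print(ensemble_next_token([model_a, model_b]))
```

With these toy numbers the averaged probabilities are 0.35 for "cat", 0.5 for "dog", and 0.15 for "bird", so the ensemble picks "dog" even though the first model alone would pick "cat". If the second model split text into different tokens, the vocabulary check would fail, which is the constraint the paragraph above describes.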

The newly developed technology eliminates this constraint. It enables diverse forms of inference-time collaboration between arbitrary heterogeneous LLMs, including ensemble modeling and NTT’s proprietary portable tuning, which were previously difficult to achieve, and thereby realizes higher accuracy through knowledge integration and transfer.

Portable tuning is NTT’s proprietary technology that links a pre-trained reward model to a base model, allowing the effects of specialized training to be applied to a new base model without retraining.

※ This article was translated from Japanese into English using AI.